By some estimates, cybercrime costs the global economy as much as $10.5 trillion a year. AI systems are exposed to these attacks because they process large volumes of data, including private information such as browsing habits.

Governments around the world have passed strict data privacy laws, such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). Businesses that build or deploy AI systems must comply with them, and noncompliance can result in legal action.

This guide explains why data privacy matters in AI, what these regulations require of businesses, what makes compliance difficult, and which practices support compliant AI development.

Why Data Privacy Matters in AI

Sensitive Data Protection

AI systems frequently process sensitive information, such as:

- Personally Identifiable Information

- Financial data

- Health records

- Behavioral data

If mishandled, such information could be exposed in data breaches or used for financial fraud. Safeguarding this data is therefore both a legal requirement and an ethical one.

Building Customer Trust

Users are more inclined to engage with AI solutions when they believe their data is handled properly, and a single privacy violation can quickly damage a brand’s reputation. For example, when companies misuse data or collect more than they disclose, customers feel deceived and lose loyalty. Respecting privacy builds long-term trust and supports customer retention.

Preventing Bias

Poor data management can produce biased AI models. For example, an AI-powered hiring tool may discriminate against some groups if it carelessly uses demographic data or personal identifiers. Sound data privacy practices, such as anonymization and removing superfluous data, reduce the chance that bias creeps into AI decisions.

Transparency

Because AI systems are frequently black-box models, it can be difficult to explain how they reach decisions. Yet people have a right to know how automated decision-making uses their data. Maintaining transparency promotes accountability and supports regulatory compliance.

Ethical AI Adoption

Public opinion shapes the acceptance of AI. Resistance to adoption will grow if people believe AI violates their privacy or uses their personal information without permission. Protecting data privacy is therefore a foundation of ethical AI development.

GDPR

The General Data Protection Regulation (GDPR) is widely regarded as one of the most significant and influential data protection regulations in the world. It was introduced to address the vast volumes of personal data that organizations collect and process.

Along with requiring companies to manage personal data securely, the regulation gives individuals more control over their data. It is also notable for its reach: it applies to any organization that handles the personal data of people in the EU, wherever that organization is based.

For AI developers and companies, this means that any application handling the personal data of people in the EU, whether for training machine learning models or delivering personalized services, must comply with GDPR’s strict requirements.

Principles of GDPR

Purpose Limitation

Data can only be collected for specific and legitimate purposes. For example, if data is collected for improving a recommendation system, it cannot later be used for unrelated marketing without consent.

Data Minimization

Only the minimum data necessary for the stated purpose should be collected. This is especially critical in AI, where over-collecting data introduces compliance risks.

Accuracy

Organizations must keep personal data up to date and correct inaccuracies. In AI, using outdated or incorrect data can not only breach GDPR but also degrade model performance.

Storage Limitation

Personal information must not be retained for longer than is required. For AI, this means setting retention schedules for training data and erasing data once it is no longer needed.
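
A retention schedule can be enforced with a periodic purge job. The sketch below is a minimal illustration, not a prescribed implementation: the 365-day window, the `collected_at` field, and the `purge_expired` function are all assumptions made for this example.

```python
from datetime import datetime, timedelta, timezone

# Assumed retention window; the real value depends on the stated purpose.
RETENTION = timedelta(days=365)


def purge_expired(records, now=None):
    """Return only the records still inside the retention window.

    Each record is a dict with a timezone-aware 'collected_at' datetime
    (a hypothetical schema chosen for this example).
    """
    now = now or datetime.now(timezone.utc)
    return [r for r in records if now - r["collected_at"] <= RETENTION]


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "collected_at": now - timedelta(days=30)},
    {"id": 2, "collected_at": now - timedelta(days=400)},
]
kept = purge_expired(records, now=now)
print([r["id"] for r in kept])  # [1]
```

Passing `now` explicitly keeps the function easy to test; a scheduled job would simply call it with the default.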

Integrity and Confidentiality

Data must be protected from misuse and unauthorized access. Strict access controls and encryption are crucial.

CCPA

The California Consumer Privacy Act (CCPA) is a state data privacy law and one of the most comprehensive privacy laws in the United States. While the GDPR protects people in the EU, the CCPA safeguards the privacy rights of California residents.

Businesses creating or implementing AI solutions in the US, particularly those that gather or manage data from Californians, are required to understand and comply with the CCPA.

Principles of CCPA

Right to Know

Customers are entitled to know what information about them is being gathered and how it will be used. They are also entitled to know whether it is sold or disclosed to outside parties. Additionally, companies need to make their privacy statements easy to find.

Right to Delete

Individuals can request that a business delete the personal data it has collected, with certain exceptions, such as cases where the data is necessary for completing a transaction or complying with legal obligations.

Right to Opt Out of Sale of Data

One of the most significant principles is the consumer’s right to opt out of the sale of their personal data to third parties. Businesses must provide a clear opt-out option on their websites.

Right to Non-Discrimination

Companies may not treat customers unfairly for exercising their privacy rights. Customers who choose not to provide their data cannot be refused services, nor can they be charged different prices unless the difference is tied to the value of their data.

Disclosure

CCPA requires businesses to be transparent with consumers. This includes keeping privacy policies up to date and providing easily accessible channels for inquiries.

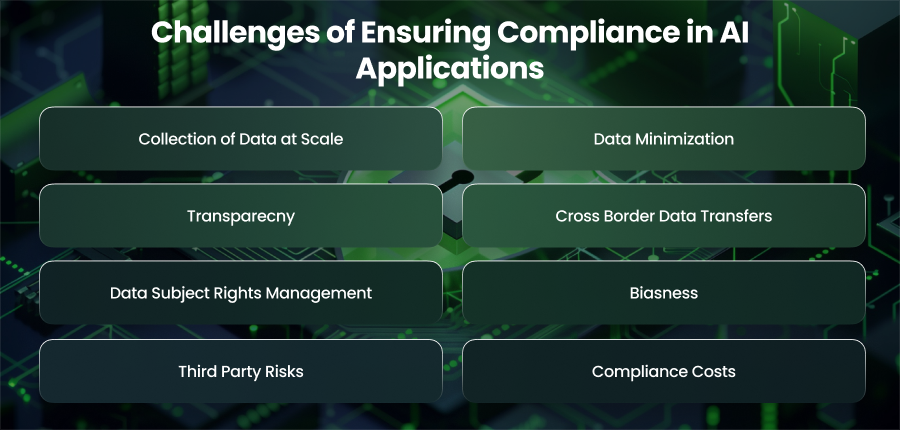

Challenges of Ensuring Compliance in AI Applications

Collection of Data at Scale

Training and developing AI models depends on large datasets, so companies often have to collect information from many sources, including customer interactions and behavioral patterns. Ensuring that everything collected complies with consent rules and lawful processing requirements can be overwhelming, especially for global datasets that fall under multiple jurisdictions.

Data Minimization

Data minimization, which requires businesses to gather only the information needed for a particular purpose, is a fundamental tenet of privacy rules. AI models, however, generally perform better with larger and more varied datasets. Developers must balance model performance against legal requirements, and this inherent tension makes compliance difficult.

Transparency

GDPR is widely interpreted as providing a right to explanation, meaning individuals can ask how AI systems made decisions that affect them. However, many AI models are opaque, which makes it difficult to trace their decision-making processes. Meeting transparency requirements while using complex AI models remains one of the toughest compliance obstacles.

Cross-Border Data Transfers

AI systems rely heavily on cloud infrastructure to process data across several locations. However, data protection regulations significantly limit cross-border transfers of personal information, and GDPR mandates safeguards when data is transferred outside the EU. Meeting these requirements without compromising an AI system’s effectiveness can be logistically challenging.

Data Subject Rights Management

Both regulations grant individuals rights such as access to and correction of their data. For AI systems that rely on historical data, honoring requests to delete or modify individual records without affecting model performance is challenging. In some cases, models must be retrained to remove the deleted data, which is resource-intensive.
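
One way to make deletion requests tractable is to record which users' data went into each model version, so that a request immediately shows which models are candidates for retraining. The sketch below is illustrative only; the `TrainingLineage` class and the version labels are hypothetical names invented for this example.

```python
from collections import defaultdict


class TrainingLineage:
    """Tracks which user records contributed to which model version."""

    def __init__(self):
        self._users_by_model = defaultdict(set)

    def record_training(self, model_version: str, user_ids) -> None:
        self._users_by_model[model_version].update(user_ids)

    def models_affected_by(self, user_id: str) -> list:
        """Model versions whose training data included this user."""
        return sorted(
            version
            for version, users in self._users_by_model.items()
            if user_id in users
        )


lineage = TrainingLineage()
lineage.record_training("v1", ["u-1", "u-2"])
lineage.record_training("v2", ["u-2", "u-3"])
print(lineage.models_affected_by("u-2"))  # ['v1', 'v2']
print(lineage.models_affected_by("u-3"))  # ['v2']
```

Whether an affected model actually has to be retrained is a legal and engineering judgment; the lineage record only narrows down where to look.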

Bias

Privacy laws aim to ensure fairness as well as protect personal data. A compliance violation may occur if AI algorithms trained on skewed datasets produce discriminatory results. Preserving fairness and nondiscrimination while working with large datasets is therefore an ongoing compliance challenge.

Third-Party Risks

AI applications often integrate with external APIs or cloud providers, yet the primary business remains responsible if third parties mishandle data or disregard compliance standards. Strong vendor management and compliance audits are therefore essential, but they add another layer of complexity.

Compliance Costs

Compliance in AI isn’t just a legal requirement; it also carries financial and operational costs, from implementing privacy-by-design principles to retraining models after data is deleted. These requirements can slow down innovation, and businesses often struggle to balance the drive for modern AI development with the resources needed for compliance.

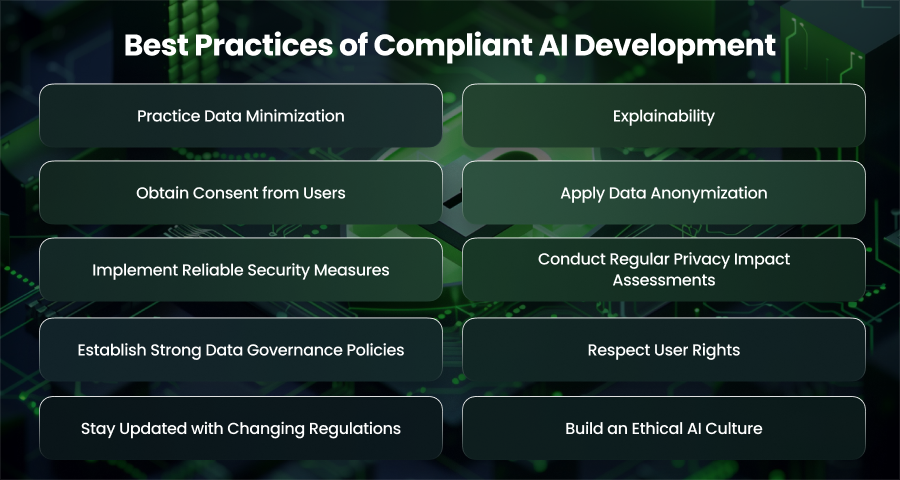

Best Practices for Compliant AI Development

Practice Data Minimization

Collecting only the data that is required is one of the most important parts of the CCPA and GDPR. Many AI models are trained on enormous datasets, but not all of that data is useful. By identifying and using only the necessary data, companies reduce privacy risk and lighten their compliance burden.
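
In a data pipeline, minimization can be as simple as an allowlist applied before records reach training or storage. The following is a minimal sketch; the field names and the `ALLOWED_FIELDS` set are assumptions chosen for illustration, and a real allowlist would come from the documented purpose of the system.

```python
# Fields the model actually needs (an assumed allowlist for illustration).
ALLOWED_FIELDS = {"age_band", "region", "purchase_count"}


def minimize(record: dict) -> dict:
    """Keep only allowlisted fields; everything else is dropped."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


raw = {
    "name": "Jane Doe",
    "email": "jane@example.com",
    "age_band": "30-39",
    "region": "CA",
    "purchase_count": 7,
}
print(minimize(raw))
# {'age_band': '30-39', 'region': 'CA', 'purchase_count': 7}
```

An allowlist is used rather than a blocklist so that any new field added upstream is excluded by default until someone justifies collecting it.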

Explainability

Because AI systems are frequently black boxes, users find it hard to understand how decisions are made. The GDPR is widely read as granting a right to an explanation, and the CCPA requires companies to tell customers how their data is used. AI developers therefore need to build systems that handle data transparently and give clear justifications for their decisions.

Obtain Consent from Users

Before collecting or processing personal data, organizations should obtain clear consent from users. This means offering simple opt-in mechanisms and avoiding vague or hidden disclosures buried in lengthy terms.
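
An opt-in mechanism implies that the default answer is "no consent" until the user explicitly grants it, and that consent can be withdrawn later. The sketch below shows that behavior with an in-memory registry; `ConsentLedger` and the purpose strings are hypothetical names for this example, and a production system would persist the records.

```python
from datetime import datetime, timezone


class ConsentLedger:
    """In-memory opt-in registry keyed by (user_id, purpose)."""

    def __init__(self):
        self._grants = {}

    def grant(self, user_id: str, purpose: str) -> None:
        # Record when consent was given so it can be evidenced later.
        self._grants[(user_id, purpose)] = datetime.now(timezone.utc)

    def withdraw(self, user_id: str, purpose: str) -> None:
        self._grants.pop((user_id, purpose), None)

    def has_consent(self, user_id: str, purpose: str) -> bool:
        # Default deny: no record means no consent.
        return (user_id, purpose) in self._grants


ledger = ConsentLedger()
ledger.grant("u-123", "recommendations")
print(ledger.has_consent("u-123", "recommendations"))  # True
print(ledger.has_consent("u-123", "marketing"))        # False
ledger.withdraw("u-123", "recommendations")
print(ledger.has_consent("u-123", "recommendations"))  # False
```

Keying consent by purpose means that agreeing to recommendations does not silently authorize marketing.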

Apply Data Anonymization

To reduce the risk of data misuse, organizations should anonymize or pseudonymize personal information wherever possible. Pseudonymization replaces identifiers with codes that can be linked back to a person only under specific circumstances, whereas anonymization permanently removes all identifiers. Both allow AI systems to function effectively without exposing personal identities, which reduces legal risk under both regulations.
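
The difference between the two techniques can be sketched in a few lines. This example uses a keyed hash (HMAC-SHA256) as one possible pseudonymization method and plain field removal as a simplified stand-in for anonymization. The key, field names, and function names are assumptions for illustration. Note that removing direct identifiers alone may not be enough for true anonymization, since combinations of remaining fields can sometimes re-identify a person.

```python
import hashlib
import hmac

# Assumption: in practice the key lives in a secrets manager, stored
# separately from the dataset. It is hard-coded here only for illustration.
SECRET_KEY = b"replace-with-a-securely-stored-key"


def pseudonymize(identifier: str) -> str:
    """Map an identifier to a stable keyed token (HMAC-SHA256)."""
    digest = hmac.new(SECRET_KEY, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def anonymize(record: dict, direct_identifiers: set) -> dict:
    """Drop direct identifiers outright, with no way to link back."""
    return {k: v for k, v in record.items() if k not in direct_identifiers}


record = {"email": "jane@example.com", "region": "CA", "purchase_count": 7}

pseudo = {**record, "email": pseudonymize(record["email"])}
anon = anonymize(record, {"email"})
print(len(pseudo["email"]))  # 64 (hex characters)
print(anon)                  # {'region': 'CA', 'purchase_count': 7}
```

Because the same input always yields the same token, pseudonymized records can still be joined across tables, while only the key holder can check whether a token corresponds to a given identifier.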

Implement Reliable Security Measures

Compliance requires robust technical measures in addition to policies. Companies should use the following measures to safeguard data against breaches and unauthorized access:

- End-to-end encryption

- Multi-factor authentication

- Regular penetration testing

- Access control policies

- Secure storage and transmission protocols

Conduct Regular Privacy Impact Assessments

AI initiatives should undergo regular Data Protection Impact Assessments (DPIAs), which GDPR requires for high-risk processing. By examining a system’s data collection and processing procedures, these assessments identify risks and compliance gaps before deployment.

Establish Strong Data Governance Policies

Compliance requires a structured governance framework that defines how data is collected, stored, and used. This involves:

- Creating clear data retention and deletion policies

- Documenting compliance procedures

- Ensuring third-party vendors also follow privacy laws

Data governance builds accountability. It also strengthens compliance across the AI lifecycle.

Respect User Rights

Both regulations grant individuals specific rights, such as the right to access their personal data and to restrict its processing. AI systems must be designed to support these rights.

For instance, consumers should be able to opt out of data sales, as the CCPA requires, or request that their data be deleted without breaking the AI model.
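
Supporting these rights is easier when deletion and opt-out are first-class operations in the data layer rather than manual procedures. The following toy example sketches that idea; `UserDataStore` and its methods are hypothetical names for this illustration, not an API from any library.

```python
class UserDataStore:
    """Toy in-memory store; a real system would also need to cover
    backups, logs, and derived datasets."""

    def __init__(self):
        self._records = {}
        self._no_sale = set()

    def save(self, user_id: str, data: dict) -> None:
        self._records[user_id] = data

    def delete_user(self, user_id: str) -> bool:
        """Right to delete: returns True if a record was removed."""
        return self._records.pop(user_id, None) is not None

    def opt_out_of_sale(self, user_id: str) -> None:
        """Right to opt out: mark the user so their data is never sold."""
        self._no_sale.add(user_id)

    def sellable_records(self) -> dict:
        """Records that may be shared with third parties."""
        return {u: d for u, d in self._records.items() if u not in self._no_sale}


store = UserDataStore()
store.save("u-1", {"region": "CA"})
store.save("u-2", {"region": "NY"})
store.opt_out_of_sale("u-1")
print(store.sellable_records())   # {'u-2': {'region': 'NY'}}
print(store.delete_user("u-2"))   # True
print(store.sellable_records())   # {}
```

The opt-out flag is stored separately from the record itself, so it survives even if the user's data is later deleted and re-collected.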

Stay Updated with Changing Regulations

Privacy laws are not static. New updates and regional laws continue to emerge, so businesses need to keep up with regulatory changes and adapt their systems accordingly.

Build an Ethical AI Culture

Compliance is ultimately about building trust. Companies should therefore train staff to cultivate an ethical AI culture and make consumer privacy a top priority.

Final Word

Data privacy in AI applications is required by law and essential for trust. By understanding the regulations and putting best practices into effect, companies can use AI responsibly and safeguard user data.